No-tillage and high-residue practices reduce soil water evaporation

Publication Information

California Agriculture 66(2):55-61. https://doi.org/10.3733/ca.v066n02p55

Published online April 01, 2012

Abstract

Reducing tillage and maintaining crop residues on the soil surface could improve the water use efficiency of California crop production. In two field studies comparing no-tillage with standard tillage operations (following wheat silage harvest and before corn seeding), we estimated that 0.89 and 0.97 inches more water was retained in the no-tillage soil than in the tilled soil. In three field studies on residue coverage, we recorded that about 0.56, 0.58 and 0.42 inches more water was retained in residue-covered soil than in bare soil following 6 to 7 days of overhead sprinkler irrigation. Assuming a seasonal crop evapotranspiration demand of 30 inches, coupling no-tillage with practices preserving high residues could reduce summer soil evaporative losses by about 4 inches (13%). However, practical factors, including the need for different equipment and management approaches, will need to be considered before adopting these practices.

Full text

Improving water use efficiency is an increasingly important goal as California agriculture confronts water shortages. Changing tillage and crop residue practices could help.

Crop residues are an inevitable feature of agriculture. Because no harvest removes all material from the field, the remaining plant matter, or residue, accumulates and is typically returned to the soil through a series of mixing and incorporating operations involving considerable tractor horsepower (Upadhyaya et al. 2001), an array of tillage implements (Mitchell et al. 2009) and cost (Hutmacher et al. 2003; Valencia et al. 2002).

Managing residues to essentially make them disappear is the norm in California.

Conservation tillage allows growers to plant directly into fields that contain residue from prior crops. Above, tomatoes are transplanted into cover crop residues (triticale, rye and pea) in Five Points.

Concerns about crop pathogens are exacerbated when organic materials accumulate on the soil surface (Jackson et al. 2002), and farmers believe that they need “clean” planting beds to make the seeding and establishment of subsequent crops easier and more efficient. Residue management practices in California are also influenced by tradition; until recently, they had not changed significantly for 70 years (Mitchell et al. 2009).

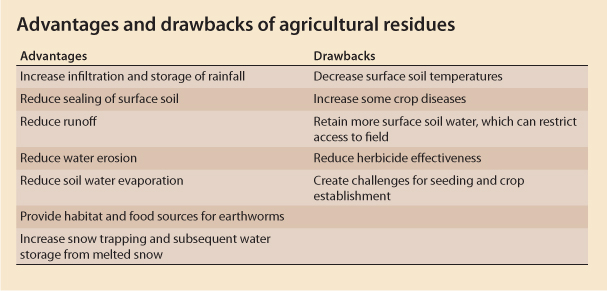

In regions of the world where no-tillage systems are common — such as Brazil, Argentina, Paraguay, Canada, Western Australia, the Dakotas and Nebraska — generating and preserving residues are an indispensable part of management and major, even primary, goals of sustainable production (Crovetto 1996, 2006). Value is derived from residues in several ways: they reduce erosion (Shelton, Jasa et al. 2000; Skidmore 1986), provide carbon and nitrogen to soil organisms (Crovetto 2006) and reduce soil water evaporation (Klocke et al. 2009; van Donk et al. 2010), along with other advantages and drawbacks (see box, page 56).

Residue amounts vary widely in cropping systems (Mitchell et al. 1999; Unger and Parker 1976). While the weight of the residues may be important, most often the percentage of soil cover or the thickness of residues is used in assessing or distinguishing their benefits (Shelton, Smith et al. 2000; USDA NRCS 2008). From research back in the Dust Bowl era, soil scientists developed relationships between the amount and architecture of residues, including crop stubble, and the reductions in soil loss due to wind (Skidmore 1986) and water (Shelton, Jasa et al. 2000). Over time, 30% or more residue cover was associated with significant reductions in soil loss, and this level of cover became an important management goal in areas where soil loss was a problem, such as the Great Plains, Pacific Northwest and southeastern United States (Hill 1996). Eventually, 30% cover became the target linked to the definition of conservation tillage and also to the residue management technical practice standard that the U.S. Department of Agriculture's Natural Resources Conservation Service has used for decades to evaluate conservation management plans (USDA NRCS 2008).

Glossary

Conservation tillage: As defined by the Conservation Agriculture Systems Initiative, a wide range of production practices that deliberately reduce primary intercrop tillage operations such as plowing, disking, ripping and chiseling, and either preserve 30% or more residue cover (as in the classic Natural Resources Conservation Service definition) or reduce the total number of tillage passes by 40% or more relative to what was customarily done in 2000 (Mitchell et al. 2009).

Conventional, or traditional, tillage: The sequence of operations most commonly or historically used in a given geographic area to prepare a seedbed and produce a given crop (MPS 2000).

No-tillage, or direct-seeding: Planting system in which the soil is left undisturbed from harvest to planting, except perhaps for the injection of fertilizers. Soil disturbance occurs only at planting by coulters or seed disk openers on seeders or drills (Mitchell et al. 2009).

Residues: Plant materials remaining on land after harvesting a crop for its grain, fiber, forage and so on (Unger 2010).

Strip-tillage: Planting system in which the seed row is tilled prior to planting to allow residue removal, soil drying and warming and, in some cases, subsoiling (Mitchell et al. 2009).

Soil water evaporation

Crop residues reduce the evaporation of water from soil by shading the surface, lowering surface soil temperature and reducing wind effects (Klocke et al. 2009; van Donk et al. 2010). A number of studies from both irrigated and rain-fed regions around the United States where no-tillage is used have reported annual irrigation savings of as much as 4 to 5 inches (10 to 13 centimeters) (Klocke et al. 2009). Crop residues are left in the field under mechanized overhead irrigation systems. When irrigation wets the soil surface, water is lost through evapotranspiration (ETc), the combination of transpiration and soil water evaporation. Transpiration, the movement of water into and through crop plants to the atmosphere, is essential for growth and crop production. Soil water evaporation, on the other hand, is generally not useful for crop production, although it does slightly cool the crop canopy microenvironment (Klocke et al. 2009).

Two processes govern soil water evaporation. When the soil is wet, evaporation is driven by radiant energy reaching the soil surface; this is called the energy-limited phase. Once the soil dries, evaporation is governed or limited more by the movement of water in the soil to the surface; this is the soil-limited phase. Subsurface drip irrigation, which typically keeps the soil surface dry, generally greatly reduces soil water evaporation (Allen et al. 1998). Irrigation systems such as furrow and overhead that frequently leave the soil surface wet can result in an evaporation loss of about 30% of total crop evapotranspiration (Klocke et al. 2009), depending on irrigation frequency.

At Kansas State University's Southwest Research and Extension Center, near Garden City, Kansas, full-surface residue coverage with corn stover and wheat stubble has been shown to reduce evaporation by 50% to 65% compared to bare soil with no shading (Klocke et al. 2009). The type of residue, though, is important, as residues from crops such as cotton and grain sorghum, which produce less material, would need to be concentrated to impractical levels to achieve evaporation decreases comparable to those obtained by typical residues from irrigated wheat (Unger and Parker 1976).

Converting to no-tillage has also been shown to reduce irrigation water needs because soil water evaporation is reduced (Pryor 2006). Conventional intercrop tillage typically involves a number of tillage passes; this is the case, for example, in the spring between winter wheat or triticale and corn seeding in San Joaquin Valley dairy silage production systems, or virtually any conventional crop rotation in which spring tillage is performed (Mitchell et al. 2009). Research in Nebraska has shown that these tillage operations dry the soil before planting to the depth of the tillage layer and that typically 0.3 to 0.75 inch (0.8 to 1.9 centimeters) of soil moisture may be lost per tillage pass (Pryor 2006). In Nebraska, switching from conventional tillage to no-tillage under center-pivot irrigation has been shown to save 3 to 5 inches (8 to 13 centimeters) of water annually, with an added savings of $20 to $35 per acre from pump costs (Pryor 2006). Water savings of 8 inches (20.3 centimeters) annually have been documented when conventional tillage under furrow irrigation was converted to no-tillage under overhead irrigation.

The water conservation value of crop residues and conservation tillage (Mitchell et al. 2009) has not been evaluated in the warm, Mediterranean climate of California. The objective of our study was to determine the effects of residues and no-tillage on soil water evaporation in California conditions.

Tillage studies

To determine the effects of intercrop tillage on soil water storage, we conducted studies in 2009 and 2010 at the UC West Side Research and Extension Center in Five Points. We monitored the surface water content in a Panoche clay loam soil during the transition from wheat harvest to corn seeding under no-tillage and standard tillage. Each treatment plot consisted of fifteen 5-foot-by-300-foot beds and was replicated three times in a randomized complete block design. Following wheat silage harvest in late April of each year, the no-tillage plots were left undisturbed, while the standard tillage plots were disked twice, chiseled to an approximate depth of 1 foot and disked again before being listed to recreate 5-foot-wide planting beds for corn.

Surface soil water content in the top 0 to 5 inches and 0 to 8 inches (0 to 12 and 0 to 20 centimeters) of soil was monitored during this transition between crops using two methods: time-domain reflectometry (TDR) instrumentation (Hydrosense, Campbell Scientific, Logan, UT) that had been calibrated for the experimental soil, and gravimetric water content techniques. Water content sampling consisted of about 12 TDR readings made in the outer 6 inches of randomly selected bed tops in each plot and four to six 3-inch-diameter soil cores collected in similar areas and composited for each gravimetric water content measurement. Soil bulk density was determined at the start of each study.

To account for possible changes in soil bulk density resulting from standard tillage, two 3-inch-diameter soil cores per plot were collected, dried and weighed following the disking operations. These density determinations were then used with the gravimetric water content measurements to calculate soil volumetric water content (SVWC). Percentages of wheat straw and corn stover residue cover were determined using the line-transect method (Bunter 1990).
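The conversion from gravimetric to volumetric water content described above can be sketched as follows. This is a minimal illustration using the standard relationship (volumetric content equals gravimetric content times bulk density divided by the density of water); the function name and the sample masses and bulk density are hypothetical and are not taken from the study.

```python
WATER_DENSITY_G_CM3 = 1.0  # density of water, g/cm^3


def volumetric_water_content(wet_mass_g, dry_mass_g, bulk_density_g_cm3):
    """Return volumetric water content (cm^3 water per cm^3 soil).

    wet_mass_g and dry_mass_g are the masses of the same soil sample
    before and after oven drying; bulk_density_g_cm3 is dry bulk density.
    """
    if dry_mass_g <= 0:
        raise ValueError("dry mass must be positive")
    # Gravimetric water content: g of water per g of dry soil
    gravimetric = (wet_mass_g - dry_mass_g) / dry_mass_g
    return gravimetric * bulk_density_g_cm3 / WATER_DENSITY_G_CM3


# Hypothetical composite sample: 120 g wet, 100 g oven-dry, 1.3 g/cm^3
svwc = volumetric_water_content(120.0, 100.0, 1.3)
print(f"SVWC = {svwc:.3f} cm3/cm3")
```

Because tillage loosens the soil, using a bulk density measured after disking, as the authors did, avoids overstating the volumetric water content of the tilled plots.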

A 2-year study at the UC West Side Research and Extension Center in Five Points compared soil water content in tilled (right, subsoil ripped) and no-tillage (left) plots.

Residue studies

The effects of wheat straw residues on soil water evaporation were determined in one study in 2009 and two studies in 2010. These studies were also conducted in a Panoche clay loam soil at the UC West Side Research and Extension Center. Before each study, the field was prepared by disking, land planing and ring rolling to create uniform and level conditions throughout. Soil in the entire experimental field had been similarly managed before each study in terms of previous cropping and tillage. Residue and bare-soil treatment plots measured 65.8 by 75.1 feet and were replicated four times in a randomized complete block design.

The residue plots were established by manually placing wheat straw on them to an approximate height of 4 inches (10 centimeters); the straw was collected from a uniform crop that had been grown and chopped as for silage in the study field before the start of each study. An overhead, hose-fed, eight-span, lateral-move irrigation system (Model 6000, Valmont Irrigation, Valley, NE) fitted with Nelson (Walla Walla, WA) pressure-regulated nozzles at 48 inches above the soil surface and 5-foot spacing was used to apply 2.5 inches (6.4 centimeters) of water to each plot in the 2009 study and 1.2 inches (3.0 centimeters) to each plot in the 2010 studies. This system's nozzle and hose configurations provided Christiansen coefficients of uniformity (CUs) of 93%.
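For readers unfamiliar with the uniformity measure, the Christiansen coefficient is conventionally computed from a set of collected water depths as 100 × (1 − Σ|x − mean| / (n × mean)). The sketch below assumes catch-can style measurements; the article does not describe how the 93% value was obtained, and the depths shown are hypothetical.

```python
def christiansen_cu(catch_depths):
    """Christiansen coefficient of uniformity (%) for a list of depths."""
    n = len(catch_depths)
    if n == 0:
        raise ValueError("at least one depth is required")
    mean = sum(catch_depths) / n
    if mean <= 0:
        raise ValueError("mean depth must be positive")
    # Sum of absolute deviations from the mean applied depth
    total_abs_dev = sum(abs(d - mean) for d in catch_depths)
    return 100.0 * (1.0 - total_abs_dev / (n * mean))


# Hypothetical catch depths (inches) along one transect
catch_in = [1.15, 1.25, 1.20, 1.10, 1.30, 1.20]
cu = christiansen_cu(catch_in)
print(f"CU = {cu:.1f}%")
```

A perfectly even application gives a CU of 100%, so a value of 93% indicates that the applied depth varied little across the plots.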

Surface soil water content (in the top 0 to 5 and 0 to 8 inches of soil) was monitored daily, using TDR and gravimetric water content techniques. In 2009, monitoring was done for 14 days before irrigation and 7 days after, and in 2010, monitoring was done only for 7 days after. Each daily sampling consisted of about 15 TDR readings collected along both sides of two transects in each plot and four soil cores 4 inches (10 centimeters) in diameter taken in similar areas of each plot and composited for gravimetric water content measurements. Soil bulk density measurements were made for each study using the compliant cavity method (USDA NRCS 1996).

Aboveground air temperatures (1 meter above the soil surface) and soil temperatures at 0.4, 3.9 and 7.9 inches (1, 10 and 20 centimeters) below the soil surface were determined every 15 minutes during the second study in 2010, using HOBO Pro v2 data-logging sensors (Spectrum Technologies, IL). Percentage canopy cover was determined by placing a LI-191 Line Quantum Sensor (LI-COR, Logan, UT) in full sun and then below the residue at six locations in each residue plot and calculating the amount of photosynthetically active radiation that had been intercepted by the residue.
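The cover calculation from paired sensor readings can be illustrated with a short sketch. It assumes that cover is taken as the fraction of photosynthetically active radiation (PAR) intercepted, that is, 1 minus the ratio of the below-residue reading to the full-sun reading, averaged over the six locations. The readings and names below are hypothetical.

```python
def residue_cover_percent(par_full_sun, par_below_residue):
    """Percentage of incoming PAR intercepted by the residue layer."""
    if par_full_sun <= 0:
        raise ValueError("full-sun PAR must be positive")
    return 100.0 * (1.0 - par_below_residue / par_full_sun)


# Hypothetical (full sun, below residue) PAR pairs for six locations,
# in micromoles per square meter per second
readings = [(1800, 60), (1800, 90), (1800, 45),
            (1800, 72), (1800, 54), (1800, 81)]

covers = [residue_cover_percent(sun, below) for sun, below in readings]
mean_cover = sum(covers) / len(covers)
print(f"Mean residue cover = {mean_cover:.1f}%")
```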

Less evaporation with no-tillage

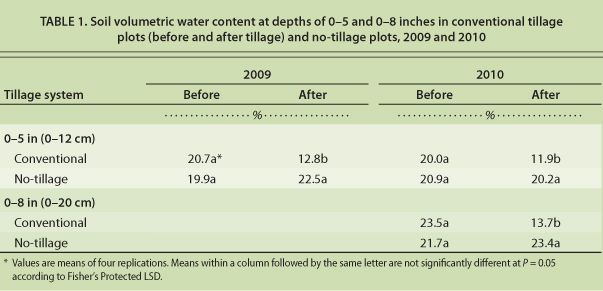

In both years, the five tillage passes performed in the conventional plots — over about 5 days after wheat chopping and before corn seeding — reduced the SVWC in the soil's top 5 inches (13 centimeters) (table 1). The reduction was 7.9% in 2009 and 8.1% in 2010; the SVWC in the no-tillage plots remained unchanged. When the SVWC was recorded in the top 8 inches of the soil in 2010, we found it was reduced by 9.8%, or 0.77 inch, in the tilled plots. Extrapolating the reduction in the top 5 inches to a 1-foot depth, which more closely matches the actual depth of tillage, suggests that the soil water losses from tillage might have been 0.93 inch (2.4 centimeters) in 2009 and 0.96 inch (2.4 centimeters) in 2010.
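The conversion from a change in SVWC to an equivalent depth of water can be sketched as the change in volumetric water content multiplied by the depth of the soil layer. The sketch assumes the reported percentages are absolute changes in volumetric water content (percentage points), which the text does not state explicitly. Under that assumption it yields roughly 0.78, 0.95 and 0.97 inch, close to but not identical to the published values, which were presumably computed from unrounded data.

```python
def water_depth_change_in(delta_svwc_percent, layer_depth_in):
    """Equivalent water depth (inches) for a change in volumetric
    water content (percentage points) over a soil layer (inches)."""
    return delta_svwc_percent / 100.0 * layer_depth_in


# 2010, measured 0-8 inch layer
print(f"2010, top 8 in: {water_depth_change_in(9.8, 8):.2f} in")

# Extrapolating the top-5-inch reductions to a 12-inch tillage depth
for year, drop in ((2009, 7.9), (2010, 8.1)):
    print(f"{year}, 12 in: {water_depth_change_in(drop, 12):.2f} in")
```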

TABLE 1. Soil volumetric water content at depths of 0–5 and 0–8 inches in conventional tillage plots (before and after tillage) and no-tillage plots, 2009 and 2010

In 2009 and 2010, the percentage residue cover (75% and 95%, respectively) in the no-tillage plots was many times higher than in the tilled plots (7.5% and 6.0%, respectively). Although no-tillage management eventually will improve the soil's water-holding characteristics, our studies had not been in place long enough to produce such a change. It is likely that the differences in SVWC between the tilled and no-tillage plots resulted from increased soil-water evaporation in the tilled plots relative to the no-tillage plots.

Impact of residues

In the residue studies, we applied wheat straw residue to a depth of about 4 inches (10 centimeters), which is comparable to application rates in other residue studies (Klocke et al. 2009; Unger and Parker 1976) and to levels of residue accumulation recently measured in sustained tomato and cotton conservation-tillage systems at the same research site and also in related corn and tomato conservation-tillage studies on the UC Davis campus (Mitchell et al. 2005). The soil coverage was over 95% in each of the three studies.

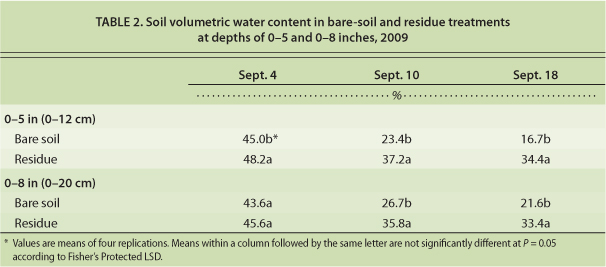

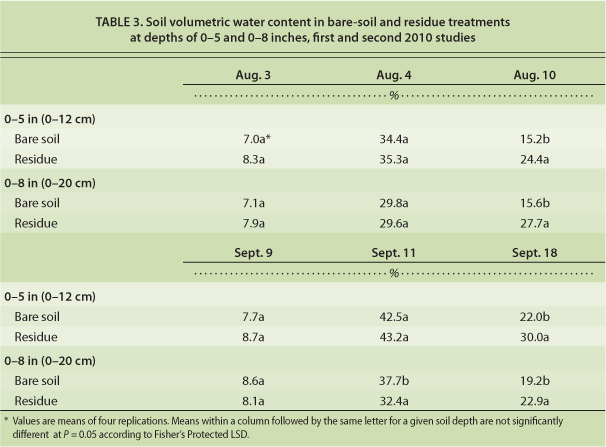

Residues reduced near-surface daily maximum soil temperatures, measured under the residues at 0.4 inch (1 centimeter) below the soil surface, by up to 20°F relative to bare-soil conditions during the second 2010 study (fig. 1). At the end of each of the three studies, our recordings showed that more water was retained in the soil under the residues than in the bare-soil plots (tables 2 and 3). The amount of retained water in the soil at the end of the studies could have been affected by evaporation losses, initial SVWC and percolation losses. Numerical simulation of water flow in the control volume — using HYDRUS 1-D software and data from the 2010 studies — indicated that the effect of percolation losses on the difference in evaporation losses between the residue and bare-soil plots was negligible (Singh et al. 2011).

Fig. 1. Maximum soil temperature (°F) at 1 centimeter below the soil surface in bare-soil and residue-covered plots, 2010 evaporation study.

TABLE 2. Soil volumetric water content in bare-soil and residue treatments at depths of 0–5 and 0–8 inches, 2009

TABLE 3. Soil volumetric water content in bare-soil and residue treatments at depths of 0–5 and 0–8 inches, first and second 2010 studies

Differences in SVWC between the bare-soil and residue plots at the shallow depth (top 5 inches) were greatest in the 2009 study, when 0.83 inch (2.1 centimeters) more water was retained in the residue than in the bare-soil plots. In the first 2010 study, the difference was 0.43 inch (1.1 centimeters) and in the second 2010 study, 0.38 inch (0.97 centimeter) (data not shown). A portion of this difference in SVWC between the residue and bare-soil plots was caused by the different initial SVWC in the plots. Accounting for the initial SVWC, the change in SVWC due to evaporation was 0.68 inch (1.7 centimeters) in 2009, 0.37 inch (0.9 centimeter) in the first 2010 study and 0.33 inch (0.8 centimeter) in the second 2010 study. The particularly high number for the 2009 study was a result of the longer evaporation estimation period (2 weeks rather than 7 to 8 days, as in the other two studies); the change in SVWC for 1 week during the 2009 study was 0.50 inch (1.3 centimeters). The changes in SVWC between treatments at the greater depth (top 8 inches) ranged from 0.33 inch (0.8 centimeter) to 0.89 inch (2.3 centimeters) when differences in initial SVWC were accounted for.

As shown in other studies, the evaporation rate from bare soil after initial wetting is greater than from soil under residues. Residues shield the soil surface from solar radiation. Likewise, air movement at the soil surface is reduced under residues, resulting in a lower evaporation rate (van Donk et al. 2010). However, if the soil under residues is not rewetted by irrigation or rainfall, evaporation will continue and after many days can exceed that from bare soil.

In our studies, about 0.06 to 0.08 inch (0.15 to 0.2 centimeter) of the initial applied water was retained in the residue itself (fig. 2). This water, however, almost completely evaporated within about 2 days. These recordings match quite closely the results of studies in Nebraska, where 0.08 to 0.1 inch (0.2 to 0.3 centimeter) of water evaporated from residue after wetting events (van Donk et al. 2010). They indicate that evaporation losses from residues can be significant, particularly if irrigation or rainfall is light and frequent. Evaporation of 0.1 inch from a 0.5-inch (1.3-centimeter) application is a 20% loss, which is significant (van Donk et al. 2010). Heavier or less-frequent irrigations would be more effective in decreasing the proportional water loss from residues; however, concerns about runoff at high application rates may limit an irrigator's option to do that. In this regard, no-tillage offers an advantage: sustained no-tillage allows higher irrigation rates before runoff, because changes in soil structure and porosity result in higher infiltration rates (Pryor 2006).
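The 20% figure follows from dividing the water held by the residue by the depth applied. The sketch below extends that arithmetic to the two application depths used in these studies. It assumes, for illustration only, that the residue holds a fixed 0.1 inch per wetting event regardless of application depth; the function name is hypothetical.

```python
def residue_loss_fraction(intercepted_in, applied_in):
    """Fraction of an application lost to evaporation from the residue."""
    if applied_in <= 0:
        raise ValueError("applied depth must be positive")
    # The residue cannot hold more water than was applied
    return min(intercepted_in, applied_in) / applied_in


# Assumed 0.1 inch held by the residue per wetting event
for applied in (0.5, 1.2, 2.5):
    loss = residue_loss_fraction(0.1, applied)
    print(f"{applied:.1f} in applied: {loss:.0%} lost from residue")
```

Under that assumption the proportional loss falls from 20% for a 0.5-inch application to 4% for a 2.5-inch application, which is the reasoning behind favoring heavier, less-frequent irrigations.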

The water evaporation rate from plots with residue (example at right) was consistently lower than from bare-soil plots (left) after overhead irrigation, in Five Points.

Water conservation

The general finding that residue cover tends to reduce soil water evaporation relative to bare soil has been consistently shown in a wide range of studies (Crovetto 1996; Klocke et al. 2009; Unger and Parker 1976; van Donk et al. 2010). The water conservation value of residues, however, remains controversial for a number of reasons (van Donk et al. 2010). In some U.S. regions, the harvest of residues for animal feed or as a source of cellulose for domestic biofuel production is increasing. Because maintaining residues has long been a conservation goal and a primary means for reducing erosion, research is now under way in these areas to evaluate the impacts of crop residue removal and develop recommendations for sustainable removal rates (Andrews 2006) and to better quantify both the agronomic and economic effects of residues on components of the soil water balance (van Donk et al. 2010).

Predicting or projecting the season-long impacts of residue cover relative to bare soil is complicated and depends on a number of interacting factors, including soil type, planting date, crop type, crop spacing, irrigation frequency and potential evapotranspiration. Work by Klocke et al. (2009) in Kansas suggested that residues may reduce energy-limited evaporation by 50% to 65% compared with evaporation from bare soil with no shading.

Our study is limited because we did not have a crop growing in the field when the measurements were taken. To compare our findings with recent similar studies that have included a transpiring crop, we estimated the longer-term impacts of having residues in a field relative to bare soil using data from our study and the following assumptions: (1) bare soil in our three studies evaporated about 84% more water than the soil with residues; and (2) for a typical summer crop produced in the Five Points region, evapotranspiration is about 30 inches.

We used two different data sources to estimate the longer-term water conservation potential of residue-covered versus bare soil. Data from Garden City, Kansas, indicated that evaporation was about 30% of evapotranspiration for a center-pivot-irrigated corn crop (Klocke et al. 2009). In addition, unpublished data from B. R. Hanson suggested that evaporation from furrow-irrigated tomatoes in California is closer to 15% of evapotranspiration. Under these two scenarios, bare soil evaporating 84% more water than residue-covered soil would correspond to 2.1 inches (5.3 centimeters) more water lost from bare soil than from under residues if evaporation were 15% of evapotranspiration, and 4.1 inches (10.4 centimeters) if evaporation were 30% of evapotranspiration. This extrapolation is remarkably close to the 3.5 to 4.1 inches (9.0 to 12.4 centimeters) of water savings from leaving residues on cornfields in west-central Nebraska (van Donk et al. 2010) and the 2.9 inches (7.5 centimeters) of water savings in Nebraska on irrigated cornfields with growing-season crop residues (Klocke et al. 2009).
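The two scenarios can be reproduced with a short calculation. The article does not state the formula, so this is one reading of the arithmetic that matches the reported 2.1 and 4.1 inches: bare-soil evaporation is taken as the stated fraction of a 30-inch seasonal evapotranspiration, and residue-covered soil is assumed to evaporate that amount divided by 1.84 (bare soil evaporating 84% more). The function and parameter names are hypothetical.

```python
def extra_bare_soil_evaporation(seasonal_et_in, evap_fraction,
                                bare_to_residue_ratio=1.84):
    """Seasonal evaporation (inches) lost from bare soil beyond that
    lost from residue-covered soil."""
    bare_evap = seasonal_et_in * evap_fraction
    # Assumption: bare soil evaporates 84% more than residue-covered soil
    residue_evap = bare_evap / bare_to_residue_ratio
    return bare_evap - residue_evap


SEASONAL_ET_IN = 30.0  # assumed summer crop evapotranspiration, Five Points

for label, fraction in (("furrow-irrigated tomato", 0.15),
                        ("center-pivot corn", 0.30)):
    saved = extra_bare_soil_evaporation(SEASONAL_ET_IN, fraction)
    share = saved / SEASONAL_ET_IN
    print(f"{label}: {saved:.1f} in ({share:.0%} of seasonal ET)")
```

The estimate scales linearly with both the seasonal evapotranspiration and the evaporation fraction, so the result is only as reliable as those two inputs.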

In Five Points, soil is disked to incorporate residues — the conventional practice. Transitioning to reduced-tillage practices could significantly improve water use efficiency in California agriculture.

Prospects for California

Improving the water use efficiency of crop production by increasing the amount of water that is transpired by a crop relative to the amount that is evaporated by the soil has been identified as a management goal for California agriculture (Burt et al. 2002; Hsiao and Xu 2005). Transitioning from tillage and residue management practices used in California today to high-residue, no-tillage practices may partially accomplish this goal, according to our studies and similar recently published studies in Nebraska and Texas. In our studies, coupling no-tillage with high-residue preservation practices could reduce soil water evaporative losses during the summer season by about 4 inches (10.2 centimeters), or 13%, assuming a seasonal evapotranspiration demand of 30 inches. In Texas, a study of strip-till cotton grown in wheat residues, compared to cotton under conventional tillage, showed decreased soil water evaporation, increased crop transpiration and an increase in water use efficiency of 37% (Lascano et al. 1994).

However, a number of practical factors will need to be addressed before any wholesale transformation to no-tillage, residue-preserving production can be envisioned in California; these include the relative ease with which a farm's existing cropping mix might be converted to no-till, the need for and cost of new equipment and the learning curve for new management practices. Also, more research is needed on water balance and crop productivity under no-tillage and high-residue field conditions.

Certain California cropping systems, such as dairy silage and small grain rotations, may initially be more amenable to being converted to no-tillage and to maintaining sufficient residue amounts than others. Surveys conducted by the Conservation Agriculture Systems Initiative, for instance, have documented that high residue levels are achieved in sustained no-tillage and strip-tillage dairy silage fields. Long-term studies with conservation tillage and cover-cropped tomato and cotton rotations in Five Points, and conservation-tillage corn and tomato in Davis, have also demonstrated the ability to maintain high residue levels while sustaining productivity (Mitchell et al. 2005; Mitchell et al. in press).

The use of cover crops to provide relatively high surface residue levels has also been tried commercially in tomato fields in the western San Joaquin Valley in recent years. Transitioning to such management systems, however, has required considerable planning, know-how and persistence. The reductions in soil water evaporation that have been shown here add to the list of benefits of conservation-tillage systems for California producers (Mitchell et al. 2009; Mitchell et al. in press).

In Firebaugh, fresh-market tomatoes will be planted directly into a triticale cover crop that has been treated with herbicides.