
Data parties engage 4-H volunteers in data interpretation, strengthening camp programs and evaluation process

Publication Information

California Agriculture 75(1):14-19. https://doi.org/10.3733/ca.2021a0005

Published online March 10, 2021

Abstract

Participatory evaluation is a form of citizen science that brings program stakeholders into partnership with researchers to increase the understanding and value that evaluation provides. For the last four years, 4-H volunteers and staff have joined academics to assess the impact of the California 4-H camping program on youth and teen leaders in areas such as responsibility, confidence and leadership. Volunteers and nonacademic staff in the field informed the design of this multiyear impact study, collected data and engaged in data interpretation through “data parties.” In a follow-up evaluation of the data parties, we found that those who participated reported deeper understanding of and buy-in to the data. Participants also provided the research team insights into findings. By detailing the California 4-H Camp Evaluation case study, this paper describes the mutual benefits that accrue to researchers and volunteers when, through data parties, they investigate findings together.

Full text

Data parties are a form of participatory evaluation that focuses on the data analysis and interpretation portion of the research process (Lewis et al. 2019). A data party gathers stakeholders to analyze or interpret collected data, or both (Franz 2013). Though data parties are not a new idea, few articles have reported the benefits and outcomes of such events. Participants in our own data parties have found the events to be a valuable tool that “breaks down” data into manageable pieces of information and allows stakeholders to process the evaluation findings to generate ideas for program improvement (Lewis et al. 2019). For participants, data parties can create a sense of ownership regarding both the data and the process (Fetterman 2001). Benefits also accrue to the researchers as they engage stakeholders in data interpretation, though these benefits are less well documented.

The public can participate to varying degrees and in varying ways in citizen science research. The definition of citizen science is multifaceted and in a state of flux (Eitzel et al. 2017), but here in the United States — especially in the field of ecology — we often think of citizen science as a practice in which people contribute observations or efforts to the scientific work of professional scientists, especially as a way to expand data collection (Bonney et al. 2009; Shirk et al. 2012). Shirk et al. (2012) define “public participation in scientific research” as “intentional collaborations in which members of the public engage in the process of research to generate new science-based knowledge.” They present models that outline various degrees and types of citizen involvement in the scientific process. As most broadly defined, citizen science includes “projects in which volunteers partner with scientists to answer real-world questions” (Cornell Lab of Ornithology 2019). When we think of citizen science in this context — as community members and scientists working together to answer questions of mutual interest — we see its application not only in the physical sciences but in the social sciences as well.

Participatory evaluation, the broad focus of this research, is by definition a type of citizen science. In participatory evaluation, researchers collaborate with individuals who have a vested interest in the program or project being evaluated (Cousins and Whitmore 1998); such individuals can include staff, participants, organizations and funders. Participatory evaluation is beneficial not only for community members and stakeholders but for researchers as well (see Flicker 2008 for an example of benefits to all involved in participatory work). Engaging stakeholders in evaluation data can be difficult, yet we know that understanding and utilizing data are critical to improving program outcomes and practices. Evaluation is useful only insofar as it is understood, embraced and acted upon by those who can affect what happens in a program. This paper describes the California 4-H Youth Development Program's (California 4-H) statewide camp evaluation and the use of data parties, a participatory evaluation strategy, as a means of engaging stakeholders (staff members, along with teen and adult volunteers) in data analysis and camp improvement. It explores outcomes and potential impacts when researchers partner with volunteers to better understand the statewide 4-H camp program.

Youth and adults, often paired for the gallery walk, discuss their thoughts and perspectives on data from 4-H campers and teenage camp staff. Data party participants reported a greater understanding of and buy-in to the data.

Involving stakeholders in program evaluation entails multiple challenges, potentially including participants' lack of interest or their belief that they are ill-equipped to analyze or interpret data. Participatory evaluation involves stakeholders in a meaningful way, such that they are included in the research and evaluation either from the start or at various points throughout the process (Cousins and Whitmore 1998; Patton 2008). By its nature, Cooperative Extension research lends itself well to participatory research; it is amenable to creating an environment in which stakeholder voices help guide the research process (Ashton et al. 2010; Franz 2013; Havercamp et al. 2003; Tritz 2014).

Interpreting data through a data party

California 4-H annually hosts approximately 25 resident camps, each five to seven days long. The camps are locally administered by volunteers and planned and delivered by teenagers. In 2016, California 4-H began the process of evaluating the statewide 4-H camp program to measure youth outcomes and improve camp programs.

The California 4-H evaluation coordinator (one of this paper's authors) approached the 4-H Camping Advisory Committee — composed of UC academics, staff, 4-H volunteers and teenagers — to design and implement a statewide evaluation of the 4-H camping program. The committee identified outcomes to measure, including outcomes for campers (generally ages 9–13) and teen staff (ages 14–18). The evaluator, working in partnership with the committee, developed two youth surveys to measure the identified outcomes. One survey, which focused on both campers and teen staff, measured confidence, responsibility, friendship skills and affinity for nature. A second survey, focusing on teen staff, assessed leadership skills and youth-adult partnership. See Lewis et al. (2018) for details on the development of these tools. The 4-H Camping Advisory Committee and the evaluation coordinator realized that it would be important to share the evaluation results with the camps involved in the study and therefore decided to conduct data parties. The UC Davis Institutional Review Board approved the evaluation.

Camps sent teams of three to six people to the data parties. All were leaders in their camp programs, yet brought differing perspectives as adult volunteer directors, teen leaders or 4-H professional staff. Here, members of a team create their camp improvement plan.

Participants

Nine 4-H camps participated in the statewide evaluation during the summer of 2016 and 12 camps did so in 2017. Two daylong data parties were held, one in each study year, after the conclusion of the camp season. We invited all camps included in the study to the sessions, emphasizing that individuals in key leadership roles (for example, adult camp administrators, youth directors and 4-H professional staff) should attend. Seven of the nine camps participated in the first data party and five of 12 participated in the second (table 1). Though the individuals who attended were engaged in participatory evaluation, we use the term participants to refer to stakeholders with leadership roles at camps and evaluator to refer to the person or persons who took the lead on data analysis and developing the tools for the data party.

Format of data parties

A data party can consist of multiple activities and tools — such as a gallery walk, data place mats and data dashboards (see Lewis et al. 2019 for examples). At the data parties described in this research, we presented data derived from surveys in an accessible format, creating a series of posters and place mats that each contained a digestible amount of information on a particular topic, such as mean difference between campers and teen leaders on target outcomes; gender differences; or before-and-after differences in teen leadership skills. Each data-party day included the following activities:

-

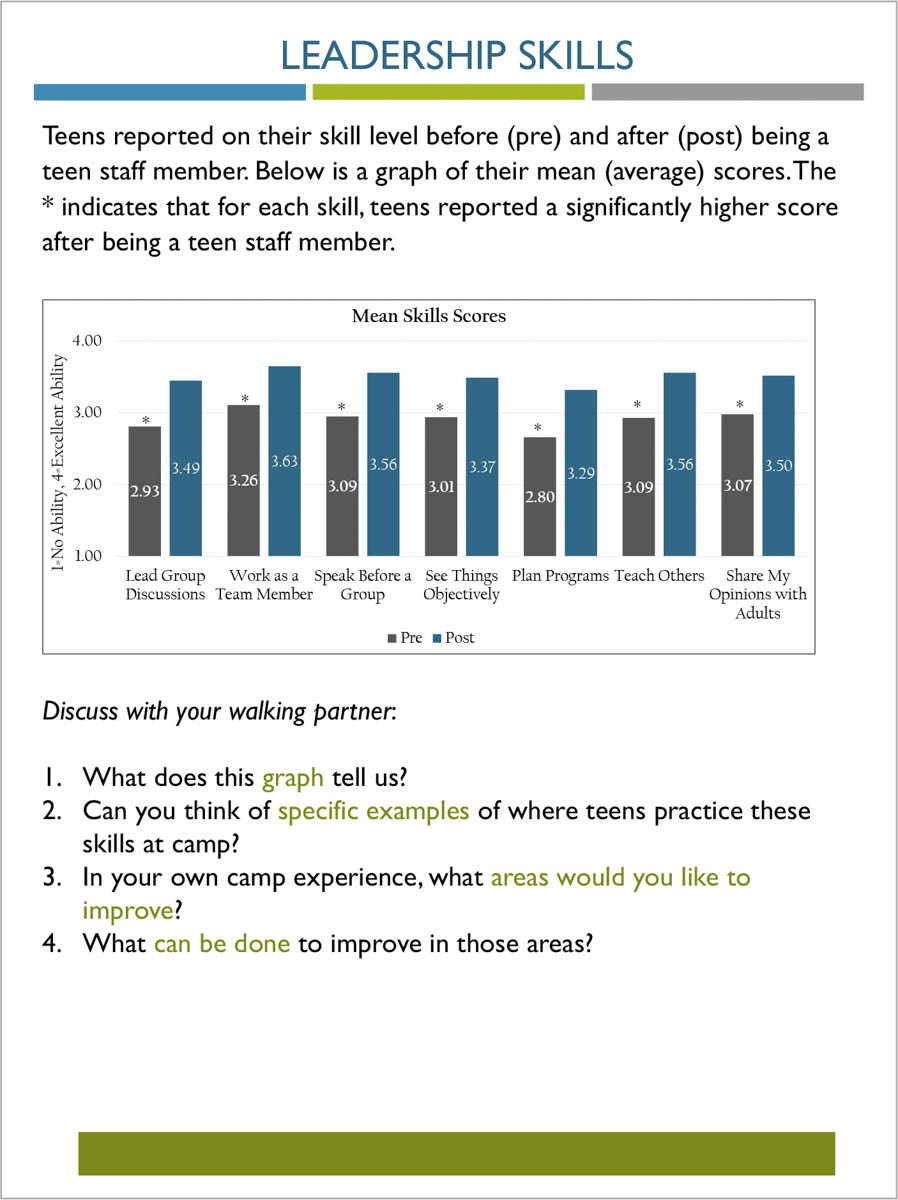

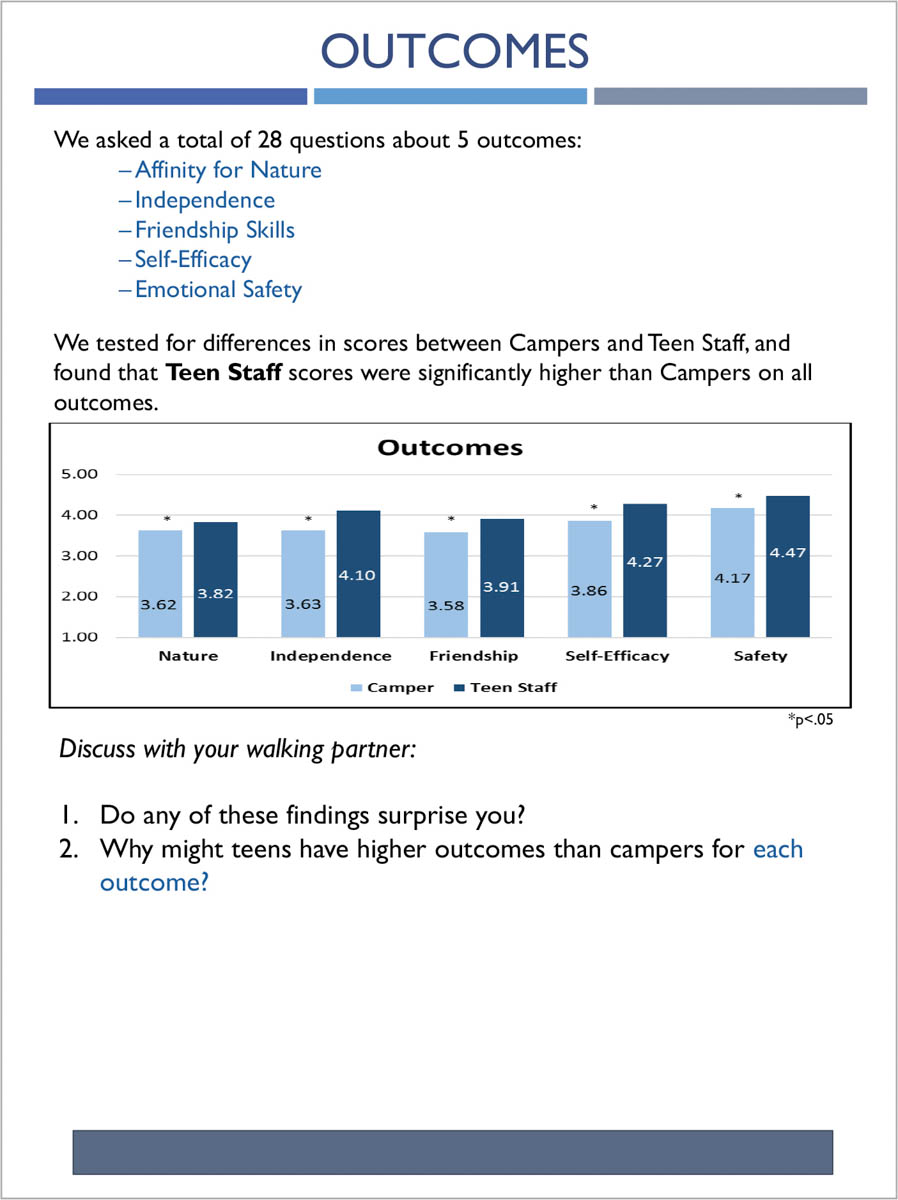

A Gallery Walk in which pairs of participants from different camps viewed eight to 10 posters that featured statewide data. Participants then discussed their observations about the data and the patterns they recognized in it. Figures 1 and 2 show example posters.

-

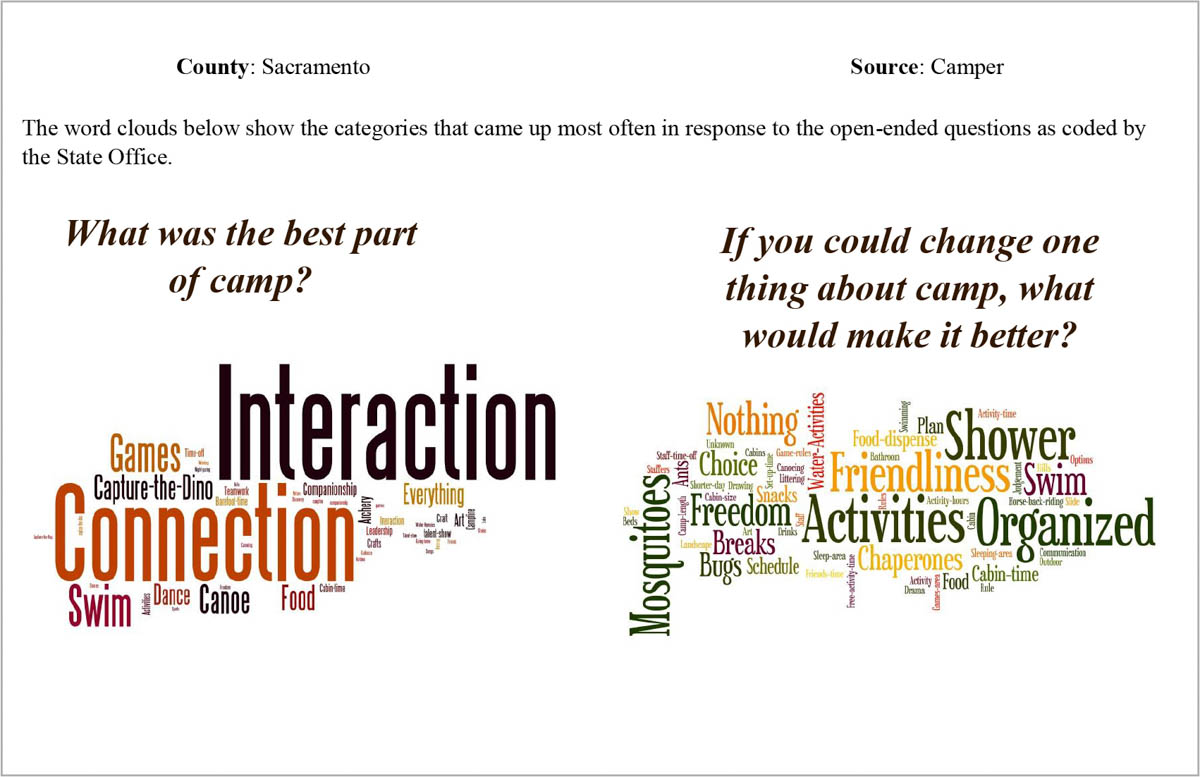

Review of data place mats, which contained graphs and qualitative word clouds that represented survey responses for individual camps in the study; afterward, participants shared reflections on the place mats with participants from their own camps and other camps. Figure 3 shows an example of a data place mat.

-

Introduction of tools to share survey findings. These tools helped participants clarify how they intended to share findings, and with whom.

On a Gallery Walk, participants explore a series of posters that present statewide camp evaluation findings. Group work is central to the data party, and participants value investigating and discussing data and generating ideas with peers and 4-H staff.

Participant assessment

We administered online follow-up surveys of all data-party participants nine months after the 2017 data session and 18 months after the 2016 session. Through open-ended questions, we asked participants what insights they had gained from the analysis session and how they had utilized data and learnings. Nine data-party participants completed the survey; three had attended the 2016 session only, two had attended the 2017 session only and four had attended both. Three were adult volunteers and six were professional staff. Using a five-point Likert scale, participants rated the usefulness of various data-sharing strategies, and also rated their understanding and ownership of findings and their ability to communicate findings. A copy of the assessment is in the online technical appendix.

The participant experience

Participants provided positive reports on the data party, with 100 percent of respondents saying they had gained new insights through the sessions. The majority agreed that the process led to greater understanding of the camp data and, ultimately, improvements in their camp programs (see fig. 4).

FIG. 4. Outcomes of the data-party experience as reported by participants. Scale is: (1) strongly disagree, (2) somewhat disagree, (3) neither agree nor disagree, (4) somewhat agree and (5) strongly agree. A copy of the assessment is in the online technical appendix. SD = standard deviation; bars represent standard errors.
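As a minimal sketch of how the summary statistics named in the figure 4 caption (mean, standard deviation and standard error) could be computed for a single survey item, consider the Python snippet below. The function name `summarize_likert` and the ratings are hypothetical and are not taken from the study's data or analysis code. The sketch also assumes the sample standard deviation (n - 1 denominator), which the article does not specify.

```python
from math import sqrt
from statistics import mean, stdev


def summarize_likert(ratings):
    """Return (mean, sample SD, standard error) for five-point Likert ratings.

    Hypothetical helper for illustration; not the study's analysis code.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings to compute a sample SD")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 5")
    m = mean(ratings)
    sd = stdev(ratings)            # sample SD (n - 1 denominator), an assumption
    se = sd / sqrt(len(ratings))   # standard error of the mean
    return m, sd, se


# Made-up ratings from nine respondents; NOT data from the study.
hypothetical_ratings = [5, 4, 5, 4, 4, 5, 3, 5, 4]
m, sd, se = summarize_likert(hypothetical_ratings)
print(f"mean = {m:.2f}, SD = {sd:.2f}, SE = {se:.2f}")
```

Because the standard error is the standard deviation divided by the square root of the number of respondents, with nine respondents it is one-third of the standard deviation.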

Researchers noted high levels of engagement among participants as they explored data within and across different camps. Participants asked questions, readily contributed to the facilitated discussions and were curious to know why their camps may have scored higher or lower than other camps on specific measures. Investigating the data brought to light issues in their programs that they hadn't considered and produced ideas about how to strengthen the programs. Survey responses verify these observations:

“It was interesting to see the remarks by the teens and campers. I think those remarks gave a lot of insight into how they see camp, and that in turn sparked ideas.”

“The affinity-for-nature scale helped me to think about how to better support our 4-H camps with environmental education.”

Participants cited various ways in which they utilized the findings presented at the data parties. These included modifying staff training, sharing findings with camp staff or 4-H management boards and making specific programmatic improvements, as cited below:

“Comments in regards to nature were especially helpful when planning our camp program this year. In training, it was helpful to see where teen staff needed support as well as putting a name to some of the skills we taught.”

“We discussed [our camp's data] with our county management board and with our camp staff. It gave our camp greater importance to board members who don't value camp. We looked at things we needed to focus on when planning our camp.”

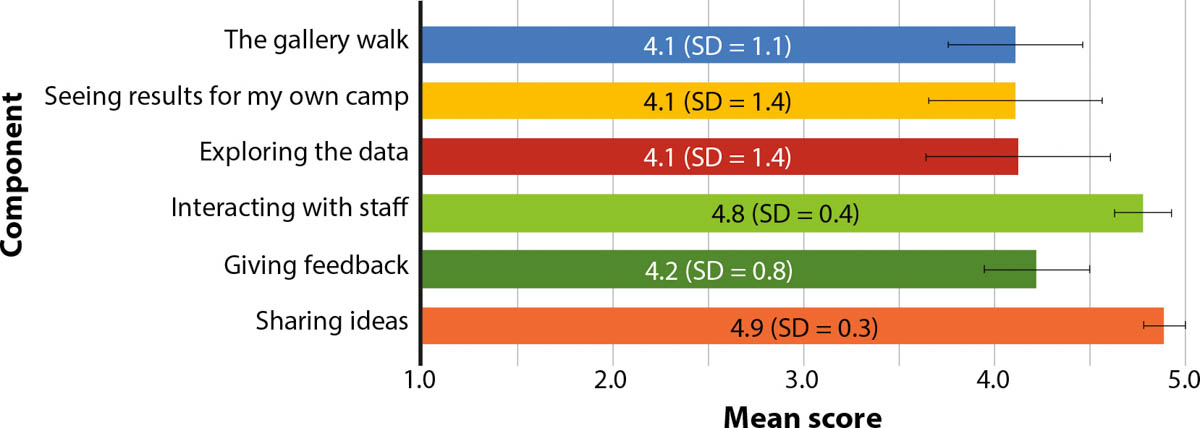

Participants valued interactions with 4-H academics knowledgeable about the data, as well as interactions with peers (see fig. 5). As the comments below demonstrate, they derived considerable benefit from interactions with others whose camps were part of the study.

FIG. 5. Usefulness ratings of data-party components as reported by participants (n = 9). Scale is: (1) not at all useful, (2) slightly useful, (3) moderately useful, (4) very useful and (5) extremely useful. A copy of the assessment is in the appendix. SD = standard deviation; bars represent standard errors.

“We received valuable feedback from not just the data, but from dialogue with other 4-H camp program leaders. It helps to keep us focused on what will make our camp program the best it can be.”

“It was helpful to see that other camps struggle in some of the same areas as our camp. Some examples are: working with diversity and inclusion of all, and outdoor education.”

Two-thirds of respondents indicated that their camps had created plans for improvement based on the data. Almost all strongly agreed that the data had led to improvements in their programs.

Benefits for camps and researchers

A main focus of participatory evaluation is that, when stakeholders become involved in the evaluation process, they perceive greater relevance in and take greater ownership of evaluations, making them more useful to those involved with the program (Cousins and Whitmore 1998; Patton 2008). Findings from the statewide camp evaluation support this idea. Further, inviting stakeholders — the volunteers, staff and youth involved in 4-H camps — to interpret the findings also benefitted the researchers conducting the evaluation. We summarize benefits for both groups below.

Insights into the data

Asking stakeholders to analyze and interpret their own data increased the evaluator's understanding of the meaning of some responses. For example, many qualitative responses to survey questions referred to camp traditions that were unfamiliar to the evaluator. It was difficult for the evaluator to effectively code the qualitative data without gaining insight from stakeholders about what these references meant. Additionally, stakeholders demonstrated robust understanding when they were asked not simply to embrace findings but to look for patterns, explore what surprised them, ask their own questions and come to their own conclusions. Stakeholders created their own knowledge, leading to deeper learning (Piaget 1971).

Sense of partnership between UC academics and key program stakeholders

Evaluation can be seen as a measuring stick, and people being assessed may therefore approach it guardedly. As Franz (2013) reported, including stakeholders in analysis may contribute to community-building between stakeholders and evaluators. The 4-H camp assessment built a bridge between UC and volunteers, increasing communication and trust.

Refinement of data collection instruments

Through the data parties, evaluators received feedback on the survey instruments. Though the California 4-H Camp Advisory Committee had provided input on development of the surveys, several staff and volunteers felt — after the first year of data collection, and reflecting on responses that youth gave — that one of the measures did not capture the targeted outcome. At a data party, the evaluator was able to discuss ideas with staff and volunteers, which allowed refinements in the survey to better fit the 4-H camp context.

Greater ownership of the findings

When data are aggregated, individuals may not see the findings as representative of their experience. But data-party participants, when empowered to make meaning from their own data, not only gained greater understanding of the findings but also took greater ownership of them. Participants' sense of control and destiny shifted. The external evaluator was no longer in sole control of the findings, and camps became more likely to embrace program improvement.

Limitations

The data-party assessment described here is not without limitations. Our sample size is small. The sample could be biased because we may have received responses only from individuals whose experience at the data party had been positive. We were unsure how effective this method of sharing data would be, and we did not formally evaluate the data parties when they occurred. We also did not collect any information from stakeholders who did not attend a data party. We do not know why those stakeholders did not attend — whether because they did not understand the purpose of the data party, lacked interest in the evaluation or found the date or location of the data party inconvenient. Finally, no teen staff members responded to the survey. Teens play a distinct role as members of camp staff, and no doubt their experience of data parties is distinct as well. The perspective of teens would be useful for improving the researcher-practitioner relationship that is central to citizen science. Despite these limitations, the participants and evaluators who engaged in the data parties did report several benefits, as outlined above. Continued interest in holding data parties for the camp evaluation, and in increasing use of data parties for other California 4-H research projects, supports our conclusion that this form of participatory evaluation is an excellent tool for involving stakeholders in the research process.

Future directions

The success of the data party in the 4-H Camp Evaluation Study has led to continued use of this tool as a vehicle to promote understanding and engagement. We have successfully replicated the data-party model with other stakeholder groups, including as a means to share 4-H Youth Retention Study data with 4-H volunteers. Since the data-party format is sometimes discussed as a way to share information with stakeholders, we developed a tool kit to assist others in constructing their own data parties. (The tool kit and templates for posters and place mats are available at bit.ly/data_party.)

Conclusion

Most researchers are not trained or encouraged to share authority with individuals inexperienced in the research process — especially in the realm of evaluation, where distance is equated with objectivity. Yet engaging those closest to data in analysis and interpretation allows practitioners and researchers alike to gain more nuanced insight. Involving stakeholders in understanding data fosters stronger, data-driven decisions about program improvement. Furthermore, it may increase stakeholder interest in the evaluation process. Since 2016, participation in the camp study has steadily grown (from nine to 22 sites) — and camps, once involved in the study, have been likely to continue the yearly evaluation. While we have no empirical evidence that data parties lead to an increase in study sites, the growth in participation may indicate that participants see value in the evaluation process (Patton 2008) when it includes a data party. For these reasons, the partnership between volunteers and researchers — a partnership enhanced by the use of data parties — has led to deeper understanding of the California 4-H camping program and greater commitment to program improvement.